The brain makes sense of math and language in different ways

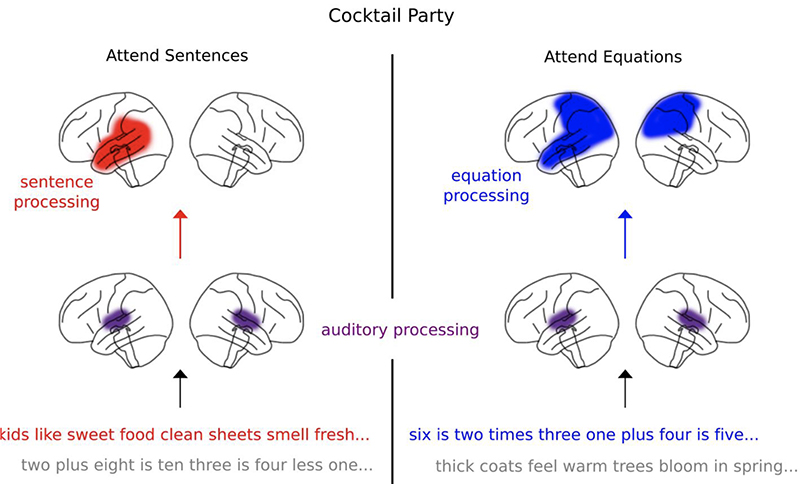

Schematic of cortical processing of sentences and equations. Exemplars of both the foreground and background stimuli are shown at the bottom. The areas shown are those that were most consistent across all analysis methods (frequency domain, TRFs and decoders).

As it turns out, thinking about words and thinking about math are kind of, but not exactly, the same thing.

Human brains are able to comprehend and manipulate both words and numbers. While numerical operations may rely on language for precise calculations or share logical and syntactic rules with language, the neural basis of numerical processing is ultimately distinct from language processing. People use distinct, dedicated cortical networks to understand language or work through equations.

A new paper by a group of University of Maryland researchers in the Journal of Neuroscience finds that these cortical networks naturally segregate when listeners are asked to pay attention either to math or to language in a situation where both are present.

“Cortical Processing of Arithmetic and Simple Sentences in an Auditory Attention Task” was written by ECE Ph.D. students Joshua Kulasingham (the lead author), Neha Joshi, and Mohsen Rezaeizadeh, together with Professor Jonathan Simon (ECE/ISR/Biology). Kulasingham is advised by Simon, while Joshi and Rezaeizadeh are advised by Professor Shihab Shamma (ECE/ISR).

How are math and language processing different?

Within the brain, cortical processing of arithmetic and of general language relies on both shared and task-specific neural mechanisms that come into play regardless of where the input comes from, whether listened to or read.

Comprehending and manipulating numbers and words are key aspects of human cognition and have a lot in common. Not surprisingly, both functions share brain processing areas (e.g., the brain’s posterior parietal and prefrontal areas). But the two functions also differ in many respects, including in where their related brain activity occurs. For example, most language processing occurs in the brain’s left temporal lobe. In contrast, mathematical processing is more widespread in the brain: it occurs in the frontal, parietal, occipital and temporal lobes of both left and right hemispheres.

About the study

The new work is not the first study to try to disentangle the brain’s processing of math from its processing of language, says Professor Simon. However, “the large majority of earlier studies have relied on the subjects reading, not listening, to the mathematical and language information. By having our subjects listen to the information, we could investigate the brain’s processing of math and language that was not tied to the brain’s processing of visual stimuli.”

Most studies also have used functional magnetic resonance imaging (fMRI) to measure brain activity. While this technology is very sensitive to the location of activity in the brain, it is insensitive to the temporal dynamics of that activity.

Here, the researchers measured brain activity using magnetoencephalography (MEG), a non-invasive neuroimaging technology that employs sensitive magnetometer sensors to make high temporal precision recordings of the naturally occurring magnetic fields produced by the brain’s electrical currents.

Humans are able to selectively process speech sounds in noisy environments, following one stream of speech even in the midst of several conversations. This is known as the “cocktail party scenario.” In this study, the researchers scanned 22 subjects with MEG while they were completing a cocktail party listening task in which two auditory streams (a male and a female speaker) were presented at the same time.

“Because our subjects listened to the information instead of reading it, we could play both the mathematical and language information simultaneously, but ask them to only pay attention to one or the other at a time,” Simon notes.

“In this way we could more easily separate out which parts of the brain process the sound of the information—which was present for both the numbers and words—from the parts of the brain that process the information itself, which contains only arithmetic or only language, depending on which stream they are paying attention to.”

The neural responses recorded in the MEG data reflected the requests to pay attention to the language or to the arithmetic. The researchers determined that simultaneous neural processing of the acoustics of words in the spoken sentences and the symbols of the equations occurred in the auditory cortex. Neural responses to the sentences and equations themselves, however, were seen only when the corresponding stream was attended to. These responses originated primarily from the left temporal area of the brain in the case of sentences (consistent with how the brain processes language) and from bilateral parietal areas in the case of equations (consistent with how the brain processes arithmetic).

Responses were also correlated with task behavior, which is consistent with their reflecting high-level processing for speech comprehension or correct calculations. The math stream contained incorrect equations, and the language stream included sentences that made no sense. When subjects noticed these, their brain activity appeared stronger in the specific brain areas that process that type of information.

In addition, the researchers found that the target of attention, either general language or equations, could be decoded from MEG responses, especially in the left superior parietal areas of the brain.

It appears that neural responses to arithmetic and language are especially well segregated during the cocktail party scenario; the correlation with behavior suggests that they may be linked to successful comprehension or calculation.

The work shows that neural processing of arithmetic relies on dedicated, modality-independent cortical networks that are distinct from those underlying language processing. These separate networks segregate naturally when listeners selectively attend to one type of information over the other.

Try an interactive exercise! Listen to what the study subjects heard while in the MEG scanner, and see which parts of your brain are involved in processing each type of information.

Original story by Rebecca Copeland at the University of Maryland Institute for Systems Research.

Published August 15, 2021